My big idea is to use Max 7 to create an interactive touch screen that you can draw on. To choose the color you draw in, you say it out loud. A microphone built into the screen recognizes the word you said and, based on the pitch of your voice, gives you a unique shade of that color: higher-pitched voices get lighter shades, and lower-pitched voices get darker ones. By changing the pitch of your voice, you can get the exact shade you want to draw in.
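To make the pitch-to-shade rule concrete, here is a minimal sketch of the mapping, written in Python rather than Max (Max is a visual patching environment) purely to show the logic. Everything in it is an assumption for illustration: the hypothetical `shade_for` function, the base hue assigned to each color word, the 80 to 400 Hz speaking range, and the choice to vary HLS lightness from 0.2 to 0.8. Pitch is placed on a logarithmic scale because we hear pitch roughly logarithmically in frequency.

```python
import colorsys
import math

# Hypothetical base hues (degrees on the color wheel) for a few spoken color words.
BASE_HUES = {"red": 0, "orange": 30, "yellow": 60, "green": 120, "blue": 240, "purple": 280}

# Assumed range of speaking pitch in Hz; a real system would need tuning.
LOW_HZ = 80.0
HIGH_HZ = 400.0


def shade_for(word: str, pitch_hz: float) -> tuple[int, int, int]:
    """Return an 8-bit RGB shade of the named color; higher pitch gives a lighter shade."""
    hue = BASE_HUES[word.lower()] / 360.0
    # Clamp the pitch into the assumed range, then find its position on a log scale.
    clamped = min(max(pitch_hz, LOW_HZ), HIGH_HZ)
    t = math.log(clamped / LOW_HZ) / math.log(HIGH_HZ / LOW_HZ)  # 0.0 = low voice, 1.0 = high voice
    # Map that position onto lightness 0.2 (dark) .. 0.8 (light) at full saturation.
    lightness = 0.2 + 0.6 * t
    r, g, b = colorsys.hls_to_rgb(hue, lightness, 1.0)
    return round(r * 255), round(g * 255), round(b * 255)


if __name__ == "__main__":
    print(shade_for("blue", 100))  # a lower voice: darker blue
    print(shade_for("blue", 300))  # a higher voice: lighter blue
```

Keeping the hue fixed by the spoken word and letting pitch move only the lightness means the two inputs never fight each other: the word always picks the color family, and the voice only picks the shade.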

I started my Max project by following a tutorial that I thought I could build on. It showed me how to produce a visual from audio. Since my idea involves choosing a drawing color through voice recognition, I then modified the patch in that direction.

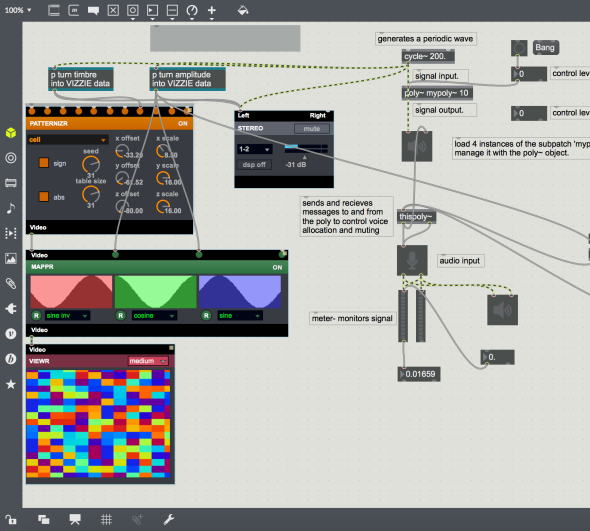

I linked the microphone (audio input) to the poly object, and from there to the stereo, patternizr, and video itself, so the noise picked up by my laptop's built-in microphone affects the visual. The meter below the mic monitors the signals my laptop detects, and the thispoly sub-object receives them. It then sends them to the poly object, which controls voice allocation and directs the signal to the stereo and the embedded sub-patches.

By choosing from the menu in the patternizr, you can change the type of pattern (cubes, waves, etc.). The patternizr produces images using function graphs; it lets you stretch the visual (x and y scale) and change the speed at which the video plays. Through the mappr, you can change the kind of waveform the sound travels on. The mappr remaps the RGB colorspace of the video, so this is where you would change the color of the pattern shown.

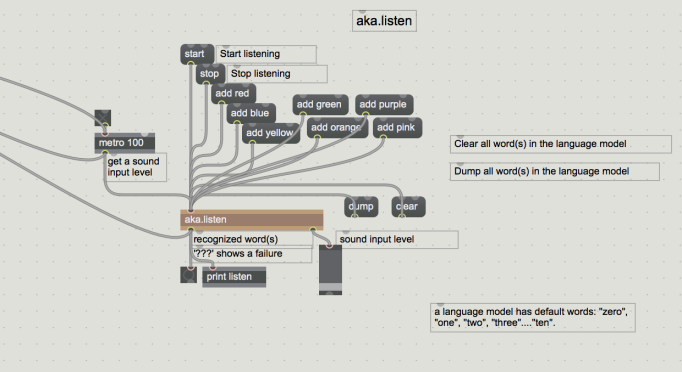

In an attempt to add speech recognition to my patcher, I downloaded the aka.listen object and loaded more words (colors) into its language model. The slider restricts the numeric range of the sound input level, and the metro outputs bangs at regular intervals.

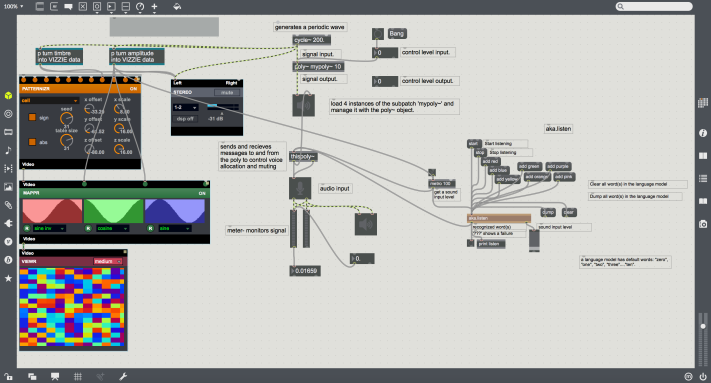

My patcher, still in progress, is shown above. I have tried altering the aka.listen object and connecting it to the other components of the patcher in different ways, but I can’t get it to have any effect on the video other than sometimes halting it. I have moved toward my big idea by building something that turns sound waves into video and by configuring the pieces that would allow voice commands. At this point, however, I am struggling to connect the two.

I added the print window shown above underneath the aka.listen object, hoping it would let me tell whether the voice commands were being identified. The slider outputs numbers restricted to a specific range, which are then multiplied by the number from the trigger above. The “Now recording” and “record” buttons display and send messages and can take specified arguments (arguments distinguish between different objects).

The video above demonstrates how the patcher reacts to my voice. The meter picks up my voice, and each command is followed by a slightly different elongated noise. However, the video doesn’t react to my voice commands any differently than it does to the other sounds my internal mic picks up.

My next step is figuring out how to capture the different frequencies produced and assign them to colors. Then I should be able to make the video react to both the spoken color and the pitch it is spoken at.
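Capturing the frequency starts with estimating the pitch of a short slice of microphone audio. One common approach is autocorrelation: compare the signal with delayed copies of itself and pick the delay (the period) where it lines up best. The sketch below is a plain-Python illustration of that technique, not Max code and not how aka.listen or any Max object works internally; the hypothetical `estimate_pitch` function and its 80 to 400 Hz search range are assumptions. Its output could feed whatever pitch-to-shade mapping the patch ends up using.

```python
import math


def estimate_pitch(samples: list[float], sample_rate: int,
                   min_hz: float = 80.0, max_hz: float = 400.0) -> float:
    """Estimate the fundamental frequency (Hz) of a short audio frame by autocorrelation.

    Returns 0.0 when no positive correlation is found (for example, silence).
    """
    min_lag = int(sample_rate / max_hz)  # shortest period considered, in samples
    max_lag = int(sample_rate / min_hz)  # longest period considered, in samples
    best_lag, best_score = 0, 0.0
    for lag in range(min_lag, min(max_lag, len(samples) - 1) + 1):
        # Correlate the frame with a copy of itself shifted by `lag` samples.
        score = sum(samples[i] * samples[i + lag] for i in range(len(samples) - lag))
        if score > best_score:
            best_lag, best_score = lag, score
    return sample_rate / best_lag if best_lag else 0.0


if __name__ == "__main__":
    rate = 8000
    # A synthetic 220 Hz tone standing in for a voice.
    tone = [math.sin(2 * math.pi * 220 * i / rate) for i in range(1024)]
    print(round(estimate_pitch(tone, rate)))  # close to 220
```

Because the estimate is quantized to whole-sample periods, it lands near, not exactly on, the true pitch; that is usually fine when the goal is picking a shade rather than tuning an instrument.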

If this project ever comes to life, it could be used for both recreational and educational purposes, serving as a game app or as a classroom lesson. People of all ages could interact with the screen and each get unique results. Elementary-age children would benefit creatively from drawing on the screen, and science classes could use it to learn about pitch, frequency, and wavelength. The technology could stand alone as an exhibit or work alongside other inventions as part of a sensory-stimulation experience.